The free pass is over. For years, companies like Meta, Google, TikTok, and Snapchat operated under a digital shield that felt nearly impenetrable. They argued they were merely the "pipes" for content, not the architects of a mental health crisis. That argument is dying in real time. Recent court rulings in the United States have cracked the foundation of Section 230, the law that long protected tech platforms from being sued for what users post.

You've probably heard the talking points before. Tech lawyers love to say that holding them responsible for an algorithm is the same as suing a bookstore for the contents of a novel. It's a clever analogy. It's also wrong. A bookstore doesn't follow you home, whisper in your ear, and hand you a book on self-harm because it noticed you looked sad near the poetry section. Algorithms are active, not passive. Judges are finally starting to see the difference between hosting content and aggressively pushing it into the hands of vulnerable kids.

Why the Legal Tide Is Turning

Two specific verdicts have sent shockwaves through Silicon Valley. We aren't just talking about abstract fines anymore. We're talking about the right to sue. The most significant shift comes from cases where judges ruled that the design of the platform itself—the addictive features, the lack of age verification, and the predatory notification loops—is a product, not just speech.

When a product is defective and causes harm, you can sue the manufacturer. If a car's brakes fail, Ford can't claim "freedom of expression." By reframing social media addiction as a "product liability" issue rather than a "content moderation" issue, lawyers found the chink in the armor. It's a brilliant move. It sidesteps the Section 230 and First Amendment arguments that have stalled progress for a decade.

The Algorithmic Push to the Edge

Meta and TikTok don't just host videos. They curate an experience designed to keep eyes glued to the screen for as long as humanly possible. This is where the harm happens. Research from groups like the Center for Countering Digital Hate has shown how quickly a new account, set up as a teenager, can be served content promoting eating disorders or extreme violence.

TikTok’s "For You" page is the perfect example. It's a high-speed feedback loop. It learns your weaknesses faster than you do. If a child lingers on a depressing video for three seconds too long, the algorithm takes that as a command. Give them more. In a courtroom, this looks less like "neutral hosting" and more like "intentional endangerment."
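
To make that feedback loop concrete, here is a toy sketch in Python. It is not TikTok's actual system, which is not public. The function names, the three-second threshold, and the 1.5x boost are all assumptions chosen for illustration. The only point is to show how a simple "dwell time means interest" rule can let one topic take over a feed.

```python
# Toy model of an engagement feedback loop. Every name and number here
# is a hypothetical stand-in, not any platform's real recommender.

def update_interest(weights, topic, dwell_seconds, threshold=3.0, boost=1.5):
    """Multiply a topic's weight by `boost` when the viewer lingers
    for at least `threshold` seconds (assumed values)."""
    if dwell_seconds >= threshold:
        weights[topic] *= boost
    return weights


def feed_shares(weights):
    """Convert raw weights into each topic's share of the feed."""
    total = sum(weights.values())
    return {topic: weight / total for topic, weight in weights.items()}


# A new account starts with three topics weighted equally.
weights = {"sports": 1.0, "comedy": 1.0, "sad": 1.0}

# The viewer lingers on five "sad" videos for four seconds each.
for _ in range(5):
    update_interest(weights, "sad", dwell_seconds=4.0)

shares = feed_shares(weights)
print(f"sad share of feed: {shares['sad']:.0%}")
```

Under these assumed numbers, five lingering views push the "sad" topic from a third of the feed to roughly 79 percent. A real recommender is far more complex, but the compounding dynamic is the part plaintiffs are pointing at.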

Snapchat and the Ghost of Accountability

Snapchat often flies under the radar compared to the giants, but it's facing its own reckoning. The platform's features, like the "Snap Map" and disappearing messages, have been cited in numerous lawsuits involving the sale of fentanyl to minors and online grooming. Parents argue these features aren't accidental bugs. They're intentional design choices meant to create a sense of urgency and secrecy.

The legal argument here is simple. If you build a platform that facilitates illegal activity through its core design, you should be held responsible for the fallout. For years, Snapchat dodged these claims. Now, a California court has allowed a major lawsuit to proceed, signaling that Snapchat's design might be "negligent." That's a huge word in a courtroom. It opens the door for discovery, which means tech executives might have to hand over internal emails showing they knew about these risks.

A Guerrilla War in the Courts

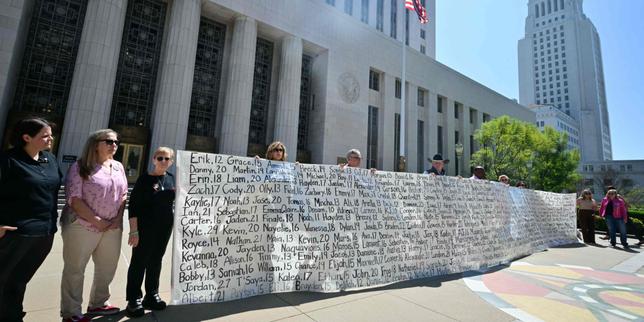

We're entering a phase of "judicial guerrilla warfare." Instead of waiting for a divided Congress to pass new laws, hundreds of school districts and thousands of parents are filing individual and class-action lawsuits. It’s a strategy of attrition. Even if the tech giants win some of these cases, the sheer volume of litigation is exhausting.

Every single case that survives a "motion to dismiss" is a win for the plaintiffs. Why? Because it leads to discovery. We saw this with the tobacco industry in the 1990s. The industry was untouchable until internal memos proved they knew cigarettes were addictive and marketed them to kids anyway. The "Facebook Files" leaked by Frances Haugen gave us a glimpse into this, but a court-ordered discovery process would be much deeper. It would be a nightmare for Meta’s legal team.

The Big Four and Their Defenses

Don't expect Google or Meta to fold overnight. They have the best lawyers money can buy, and their defense is still rooted in the idea of a "free and open internet." They claim that if they're held liable for every interaction, they'll have to censor everything. It’s a scare tactic.

They also point to their safety tools. Meta likes to brag about its "Family Center" and time-limit features. But let's be real. These tools are often buried deep in settings menus and are easily bypassed by any tech-savvy thirteen-year-old. They're more about PR than protection. They want to show the judge they "tried," even if the effort was half-hearted.

What This Means for Your Family

If you’re a parent, the legal landscape is finally starting to reflect the reality you deal with every day. The myth that "it’s up to the parents to monitor everything" is losing steam. It’s impossible for a parent to compete with a thousand engineers whose job is to make an app more addictive than a slot machine.

The courts are acknowledging that there is a power imbalance. You aren't just fighting an app; you're fighting a multibillion-dollar behavioral science experiment. The shift toward product liability means that, in the near future, we might see mandatory safety features that actually work, such as hard age gates and the complete removal of engagement-based algorithms for minors.

Moving Toward a Safer Digital Space

The immediate future is messy. Expect more headlines about massive settlements and landmark rulings. The goal isn't just to get a payout; it’s to force a change in how these platforms are built from the ground up.

If you want to protect your kids while the courts catch up, you have to be proactive. Don't wait for a "verdict" to change your home habits.

- Disable the "For You" or "Explore" feeds where possible. Stick to accounts you actually follow.

- Set boundaries at the router level. Using hardware to cut off access at 9 PM is much more effective than an app-based timer.

- Talk about the "Casino Effect." Explain to your kids how these apps use variable rewards to keep them scrolling. Knowledge is a decent shield.

- Support legislation like the Kids Online Safety Act (KOSA). Litigation is great, but federal law provides the ultimate floor for safety standards.
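
For the router-level cutoff in the list above, most home routers expose this as a "schedule" or "parental controls" setting, and the exact menu varies by model. The logic underneath is usually a simple time-window check. Here is a minimal Python sketch of that check. The function name and the 7 AM end time are assumptions for illustration; only the 9 PM start comes from the tip itself.

```python
from datetime import time

# Hypothetical curfew check, similar in spirit to a router access schedule.
# The 07:00 end time is an assumed value; pick whatever fits your household.

def is_blocked(now, start=time(21, 0), end=time(7, 0)):
    """Return True if `now` falls inside the curfew window [start, end)."""
    if start <= end:
        # Window sits within a single day, e.g. 13:00 to 15:00.
        return start <= now < end
    # Window wraps past midnight, e.g. 21:00 to 07:00.
    return now >= start or now < end


print(is_blocked(time(20, 59)))  # one minute before curfew
print(is_blocked(time(2, 30)))   # the middle of the night
```

The wrap-around branch is the detail that trips up many homemade schedules: a window that starts at 9 PM and ends the next morning has to be treated as two ranges, not one.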

The era of the "wild west" for social media is ending. It’s not happening through a single law, but through a thousand cuts in courtrooms across the country. Big Tech is finally being treated like every other industry. They’re responsible for the harm they cause.