Western media loves a "falling sky" narrative, especially when it involves aging Russian hardware. The moment a Kurs-NA antenna fails to deploy or a sensor glitches on a Soyuz or Progress craft, the headlines scream about "technical crises" and "forced manual interventions." They paint a picture of a decaying space program held together by duct tape and Soviet-era prayers.

They are missing the point entirely.

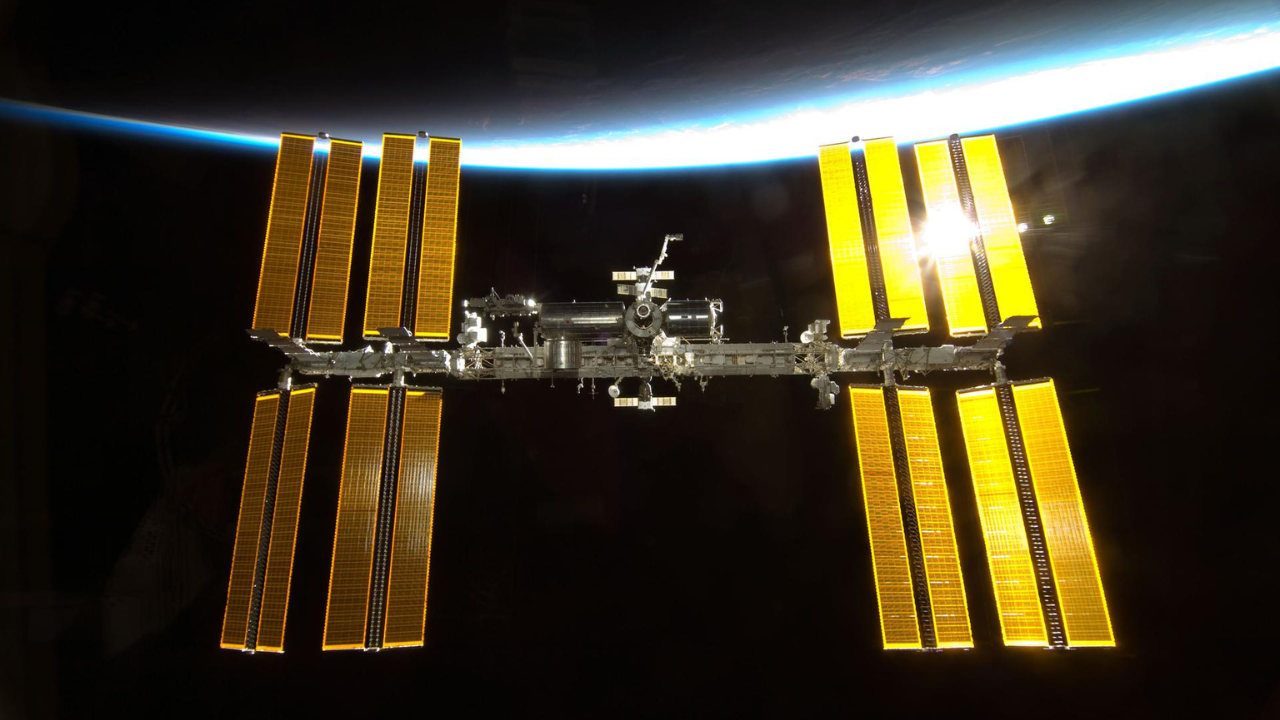

The recently reported "antenna problem" requiring a manual docking at the International Space Station (ISS) isn't a sign of weakness. It is a masterclass in operational redundancy that NASA, buried under layers of mission-assurance bureaucracy and code-dependent automation, has largely forgotten how to value. While we obsess over the failure of the robot, we ignore the absolute triumph of the pilot.

The Fetishization of Automation

We have entered an era where we trust a line of code more than a human hand. In the West, if the automated docking system on a Dragon or a Starliner fails, the mission profile usually shifts toward an abort or a massive delay while ground control runs simulations. We treat manual intervention as a catastrophic backup, a "break glass in case of emergency" scenario that suggests the mission has already failed.

Roscosmos views the world differently. To them, the automation is a convenience. The pilot is the system.

When an antenna fails to lock, the Russian cosmonaut doesn't panic. They don't wait for a software patch to be beamed up from Earth. They take the stick. This isn't a "forced" docking; it is the execution of a primary skill set. The obsession with 100% automated uptime is a luxury of those who haven't spent decades operating on the razor's edge of tight budgets and harsh environments.

The Kurs System Logic

Let’s talk about the hardware. The Kurs automated docking system is a radar-based marvel that has been around since the 1980s. Yes, it’s old. Yes, the newer Kurs-NA is meant to be more efficient, lighter, and less power-hungry. But the Western critique—that "old means broken"—ignores the fundamental physics of orbital mechanics.

Docking two objects that are each moving at roughly $7.66 \text{ km/s}$ is not about having the flashiest UI. At that shared orbital speed, what matters is the small relative velocity between them and their vector alignment.

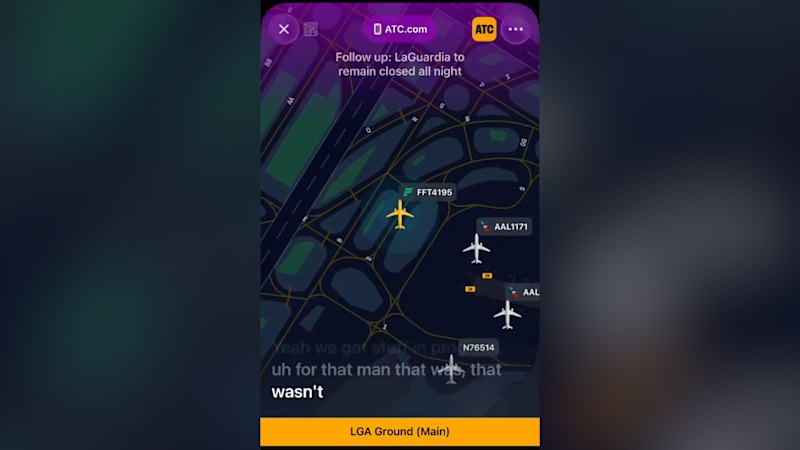

The Kurs system uses a series of antennas to calculate the line-of-sight range and the rate of approach. If one of those antennas fails to deploy, the system loses its "vision." In a digital-first culture, losing your sensor data is a death sentence for the mission. In the Russian school of engineering, you simply switch to TORU, the teleoperated (manual) control mode.
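To make "line-of-sight range" and "rate of approach" concrete, here is a minimal Python sketch of the geometry only. It is not how Kurs itself works internally (Kurs measures these quantities by radar); the function name `range_and_range_rate` and the sample numbers are hypothetical, chosen purely for illustration.

```python
import math

def range_and_range_rate(rel_pos, rel_vel):
    """Return (range in m, range rate in m/s) of a chaser relative to a target.

    rel_pos and rel_vel are 3-component relative position and velocity.
    A negative range rate means the two vehicles are closing.
    Hypothetical helper for illustration, not a flight algorithm.
    """
    rng = math.sqrt(sum(c * c for c in rel_pos))
    if rng == 0.0:
        raise ValueError("relative position is zero; line of sight is undefined")
    # Range rate is the relative velocity projected onto the line of sight.
    range_rate = sum(p * v for p, v in zip(rel_pos, rel_vel)) / rng
    return rng, range_rate

# Assumed example: chaser 500 m out, drifting straight toward the target at 1 m/s.
rng, rate = range_and_range_rate((300.0, 400.0, 0.0), (-0.6, -0.8, 0.0))
print(f"range = {rng:.1f} m, range rate = {rate:.2f} m/s")
```

Whether a radar or a pilot with a crosshair supplies the inputs, these are the two numbers that have to be driven toward zero together.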

Why TORU is Superior to Autonomy

- Cognitive Flexibility: A sensor can be fooled by sunlight reflections or "glint." A human eye looking through a crosshair cannot.

- Zero Latency: When a pilot is in the seat, the feedback loop is instantaneous. There is no "processing time" for a central computer to decide if the data is valid.

- Hardware Simplicity: The manual backup requires fewer moving parts and less power.

I have spoken with engineers who have watched these manual dockings from the inside. They don't see it as a failure of the Kurs; they see it as the ultimate validation of the Soyuz design. The ship is built to be flown, not just ridden.

The Cost of the "Perfect" Mission

NASA’s approach to docking is built on the premise that failure is not an option. This sounds noble, but it leads to "gold-plating": adding so much redundant electronic complexity that the system becomes brittle. If a sensor fails on a modern Western craft, the automated sequence often halts entirely because the computer no longer "trusts" the environment.

The Russian philosophy accepts that space is a garbage fire of radiation, extreme thermal cycles, and mechanical stress. They expect the antenna to fail. They expect the sensor to glitch. By building the mission around the human’s ability to take over, they create a more resilient system than any "seamless" automated interface could ever provide.

Imagine a scenario where a deep-space mission to Mars encounters a localized electromagnetic pulse or a micro-meteoroid strike that fries the primary docking sensors. Which crew do you want? The one that has spent ten years practicing "supervising" a computer, or the cosmonaut who has docked a 7-ton tin can manually because the antenna didn't feel like opening that day?

The Media’s Fundamental Misunderstanding of Risk

Every time a Progress resupply vehicle has an issue, the "industry experts" come out of the woodwork to talk about the "decline of Russian space power."

This is a lazy take.

Russian space hardware is built for high-cadence, low-cost access to orbit. When you fly as often as they do, you see more anomalies. Statistics dictate it. The fact that these anomalies result in successful dockings rather than mission losses is a testament to the robustness (and I use that word in its literal, mechanical sense) of their operational philosophy.
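A rough way to see the cadence argument, assuming purely for illustration that each flight independently carries the same anomaly probability $p$, over $n$ flights:

$$
\mathbb{E}[\text{anomalies}] = np, \qquad P(\text{at least one anomaly}) = 1 - (1 - p)^n
$$

Both grow with $n$, so a program that flies more often will log more anomalies even if the per-flight rate never changes. The symbols $p$ and $n$ are placeholders here, not measured values for any vehicle.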

The real risk isn't an antenna that won't deploy. The real risk is a pilot who doesn't know what to do when the screen goes blank.

Stop Asking if the Hardware is Failing

The question isn't "Why did the antenna fail?" The question you should be asking is: "Why are we so afraid of manual control?"

We’ve become addicted to the "black box" approach to aerospace. We want to push a button and watch the magic happen. But the International Space Station is an aging laboratory in a vacuum. It is a miracle that anything works at all.

When the antenna fails, the cosmonaut is doing more than just parking a spacecraft. They are asserting human dominance over a hostile environment. They are proving that the most sophisticated computer in the solar system is the three-pound lump of grey matter sitting between their ears.

The Tactical Advantage of the Glitch

There is a final, cynical layer to this that the "status quo" analysts never touch. Every "failure" of an automated system that is corrected by a human provides invaluable data that a perfect mission never could.

Russian mission control (TsUP) now has decades of data on how humans perform under the stress of manual docking in non-nominal conditions. NASA has significantly less. In a long-term survival scenario—say, a lunar base or a Mars transit—that data is worth more than a thousand successful automated dockings.

The "problem" NASA reported wasn't a problem. It was a training exercise that the West didn't even realize it was missing.

Stop looking for "seamless" perfection. Perfection is a lie told by marketing departments. In the real world—the world of cold vacuum and orbital debris—you want the pilot who can fly the ship when the "cutting-edge" sensors decide to quit.

The antenna didn't fail the mission. It gave the pilot a chance to prove why they were sent there in the first place.

Take the stick or get out of the cockpit.