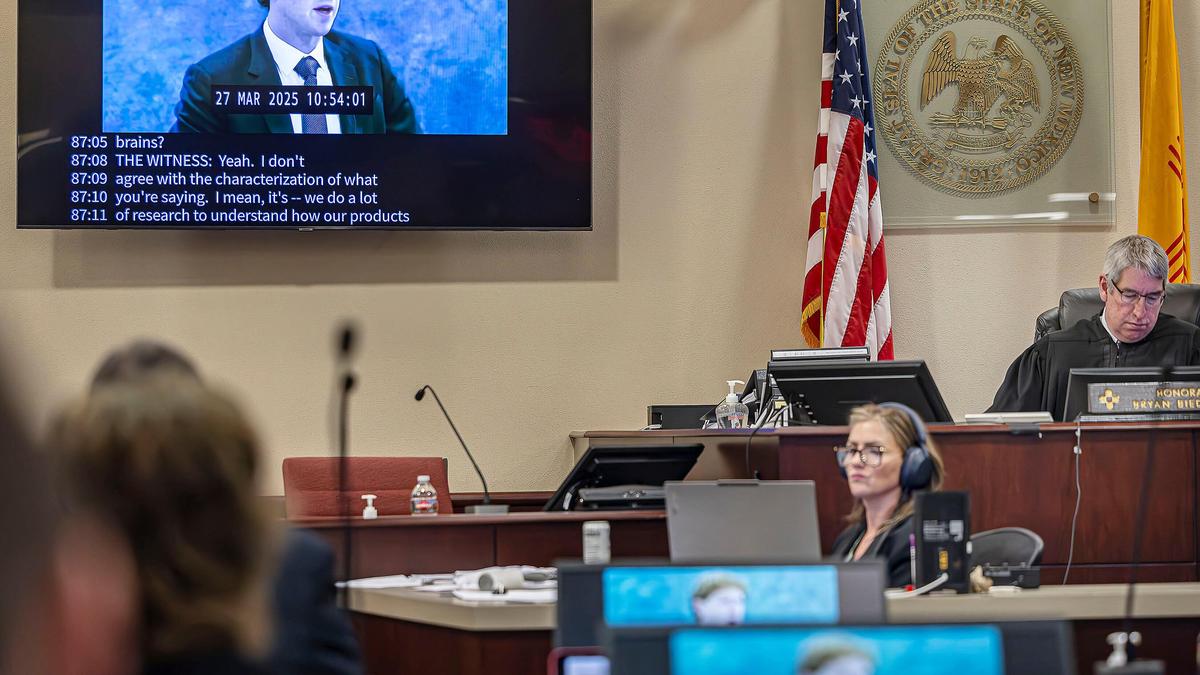

A New Mexico jury recently delivered a verdict that stripped away the carefully constructed legal shield Meta has spent a decade welding into place. By finding that the social media giant knowingly harmed children’s mental health and violated state consumer protection laws, the court moved past the abstract debate of whether "the internet is bad" and into the granular reality of product liability. This wasn't a slap on the wrist for a vague social ill. It was a forensic takedown of specific engagement features designed to bypass the undeveloped impulse control of the adolescent brain.

For years, the tech industry relied on Section 230 and the defense that they were merely neutral platforms. This verdict signals that the "neutral platform" era is dead. When a system is engineered to prioritize retention over safety, and that engineering leads to documented psychological injury, it is no longer a platform. It is a defective product.

The Architecture of Compulsion

To understand why a jury in New Mexico turned on one of the world's most powerful companies, you have to look at the plumbing of Instagram and Facebook. We are talking about the Variable Reward Schedule. This is the same psychological mechanism that makes slot machines addictive. When a child pulls down to refresh a feed, they don't know if they will see a "like" from a crush or a terrifying video of self-harm. That uncertainty triggers a dopamine spike.

In an adult brain, the prefrontal cortex acts as a brake, reminding the individual that they have work to do or need to sleep. In a thirteen-year-old, that brake hasn't finished its assembly. Meta’s internal documents, leaked by whistleblowers and unearthed in discovery, show the company knew its "rabbit hole" algorithms were funneling vulnerable teens toward increasingly toxic content. They didn't just allow it; they optimized for it because toxic content generates more "watch time" than healthy content.

The jury saw evidence that Meta’s engineering teams ignored internal warnings about features like "infinite scroll" and "beauty filters." These aren't just cosmetic choices. They are tools for stripping psychological friction out of the product. By removing the natural stopping points of an experience, like the end of a page or a chapter, infinite scroll ensures that a child’s session ends only when exhaustion sets in or a parent intervenes.

The State as the New Regulator

Washington, D.C. has spent years grandstanding in congressional hearings without passing a single meaningful law to protect minors online. Federal gridlock created a vacuum. New Mexico’s Attorney General filled it. By using the state's Unfair Practices Act, prosecutors found a backdoor to hold Big Tech accountable without needing a divided Congress to agree on a new federal digital code.

This strategy treats social media harm as a matter of consumer protection rather than free speech. If a toy manufacturer sells a doll that contains lead paint, they can't claim "free expression" as a defense. The New Mexico verdict proves that "lead paint" in the digital age is an algorithm that promotes eating disorders to teenage girls.

We are seeing a shift in how legal departments at these firms evaluate risk. Previously, the cost of a few lawsuits was seen as a line item in a multibillion-dollar budget, a "tax" on doing business. But a jury verdict changes the math. It creates a template that other states, from California to Florida, are already following. When the liability for a "defective" algorithm starts to outweigh the ad revenue it generates, the engineering will change. Not because of a moral awakening, but because of the bottom line.

Beyond the Screen

The industry often tries to shift the blame to parents. They argue that "parental supervision tools" are the solution. This is a deflection. Expecting a parent to manually filter billions of data points processed by a supercomputer is like asking someone to stop a flood with a teaspoon. The power imbalance is total.

Meta’s defense usually centers on the idea that social media provides "connection." Yet the evidence presented in these cases often shows the opposite: social isolation masquerading as digital intimacy. The jury focused on the gap between what Meta promised, a way to bring people closer, and what it delivered, a feedback loop that exacerbated anxiety and depression.

The Problem with Self-Regulation

Meta's "Safety and Integrity" teams have often been the first to be cut during "years of efficiency" and mass layoffs. This sends a clear signal to the market and the courts. If a company claims it can police itself but then fires the police to boost quarterly margins, it loses the benefit of the doubt. The New Mexico verdict is a direct response to this perceived corporate cynicism.

We must also consider the Feedback Loop of Toxicity. Algorithms are trained to show you more of what you linger on. If a depressed teenager lingers on a video about sadness, the machine interprets that as "interest" and floods their feed with similar content. It is a machine-learning system that lacks a moral compass, and the humans in charge of it have historically refused to install one if it meant a 1 percent drop in engagement.
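The loop described above can be illustrated with a toy simulation. This is a minimal sketch, not Meta's actual ranking system: the function name `simulate_feed`, the dwell times, and the update rule are all assumptions chosen only to show how an engagement-only objective amplifies whatever a user lingers on.

```python
def simulate_feed(dwell_seconds, rounds=5, learning_rate=0.5):
    """Toy engagement-only ranker (hypothetical, not any real platform's code).

    dwell_seconds maps topic -> average seconds the user lingers on that topic.
    Returns a list with the feed share of each topic after every round.
    """
    topics = list(dwell_seconds)
    # Start from a uniform feed: every topic gets an equal share.
    weights = {t: 1.0 / len(topics) for t in topics}
    history = [dict(weights)]
    for _ in range(rounds):
        # Engagement signal: how much of the feed a topic had, times dwell time.
        scores = {t: weights[t] * dwell_seconds[t] for t in topics}
        total = sum(scores.values())
        target = {t: scores[t] / total for t in topics}
        # Shift the feed toward whatever produced the most lingering.
        weights = {
            t: (1 - learning_rate) * weights[t] + learning_rate * target[t]
            for t in topics
        }
        history.append(dict(weights))
    return history


if __name__ == "__main__":
    # Illustrative dwell times only: the user lingers three times longer on sad content.
    for step, shares in enumerate(simulate_feed({"sadness": 30, "sports": 10, "pets": 10})):
        print(step, {t: round(s, 2) for t, s in shares.items()})
```

Nothing in the update rule knows what the content is about. The share of the lingered-on topic grows every round simply because dwell time is the only signal being optimized.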

The Looming Financial Reckoning

Investors are starting to wake up to the reality that Meta’s core demographic, the next generation of users, is becoming its biggest legal liability. The New Mexico case stands alongside hundreds of "Social Media Addiction" lawsuits, many of them consolidated in multidistrict litigation (MDL). This isn't a single fire; it's a forest fire.

If this trend continues, we will see a "Big Tobacco moment" for social media. For decades, tobacco companies claimed their products were safe or that the risk was the smoker's choice. Eventually, the weight of internal documents proving they knew about the harm—and targeted youth anyway—toppled them. Meta is currently in the "denial and deflection" phase of that cycle.

$$\text{Risk} = (\text{Probability of Harm}) \times (\text{Total Potential Damages})$$

When the probability of harm is "guaranteed" by the company’s own internal research, and the damages are multiplied by millions of users, the risk becomes existential. Meta’s current valuation does not fully reflect the potential for a massive, multi-state settlement that could reach the hundreds of billions.
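One way to unpack the formula above, purely as an illustration: assume total potential damages scale with the number of affected users $N$ and an average award per user $\bar{d}$. Both symbols are assumptions introduced here, not figures from the case record. Writing $p$ for the probability of harm, the expression becomes:

$$\text{Risk} = p \times N \times \bar{d}$$

With $p$ close to 1 and $N$ in the millions, even a modest $\bar{d}$ yields a very large total.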

The Shift to Product Safety Standards

What does a "safe" Instagram actually look like? It looks like a platform where the algorithm doesn't use "engagement" as its only metric. It means turning off algorithmic recommendations for minors by default. It means a hard limit on the number of notifications a child receives in a day. It means the end of the infinite scroll.
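As a rough sketch of what "safe by default" could mean in engineering terms, the settings listed above can be written as an age-based policy. Everything here is hypothetical: the names `FeedPolicy`, `policy_for`, and `may_notify`, the age threshold of 18, and the cap of 10 notifications are illustrative assumptions, not values drawn from the verdict or from any Meta product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FeedPolicy:
    """Hypothetical per-account safety settings."""
    algorithmic_recommendations: bool
    infinite_scroll: bool
    daily_notification_cap: Optional[int]  # None means no cap


def policy_for(age: int, adult_age: int = 18, minor_cap: int = 10) -> FeedPolicy:
    """Return restrictive defaults for minors (thresholds are assumptions)."""
    if age < adult_age:
        # Minors: recommendations off, paginated feed, capped notifications.
        return FeedPolicy(
            algorithmic_recommendations=False,
            infinite_scroll=False,
            daily_notification_cap=minor_cap,
        )
    return FeedPolicy(
        algorithmic_recommendations=True,
        infinite_scroll=True,
        daily_notification_cap=None,
    )


def may_notify(policy: FeedPolicy, sent_today: int) -> bool:
    """Allow a notification only while the daily cap has not been reached."""
    cap = policy.daily_notification_cap
    return cap is None or sent_today < cap
```

The design point is that the restrictive settings are the default for a minor's account rather than an opt-in buried in a settings menu.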

The tech industry will argue this will "break the internet." It won't. It will just break their current business model. We have seen this before. When cars were first mass-produced, they didn't have seatbelts or crumple zones. The industry fought those regulations too, claiming they were too expensive and would kill the automotive business. Instead, it made cars safer, and the industry thrived.

The New Mexico jury has effectively handed Meta a blueprint for its own survival, though the company may not see it that way yet. They have defined the boundaries of acceptable corporate behavior in the digital sphere. The "move fast and break things" era has finally hit a wall, and that wall is the mental health of an entire generation.

You cannot "patch" a culture of negligence with a few new privacy settings. You have to rebuild the engine. If Meta refuses to do so, the courts will eventually do it for them, one jury verdict at a time. The real question is how many more states will follow New Mexico's lead before the board of directors realizes that their current path is no longer profitable.

Demand that your state representatives move beyond "digital literacy" programs and start funding the litigation necessary to force these companies into a safety-first engineering model.