A mother in Tooele, Utah, recently discovered her elementary-aged son playing a game titled "Five Nights at Epstein" on a school-issued Chromebook. This was not a dark-web hack or a sophisticated breach of state secrets. It was a user-generated modification hosted on a popular gaming site that bypassed the district’s content filters. While the school district scrambled to patch the hole, the incident highlights a massive, systemic failure in how educational institutions police the software they hand to children.

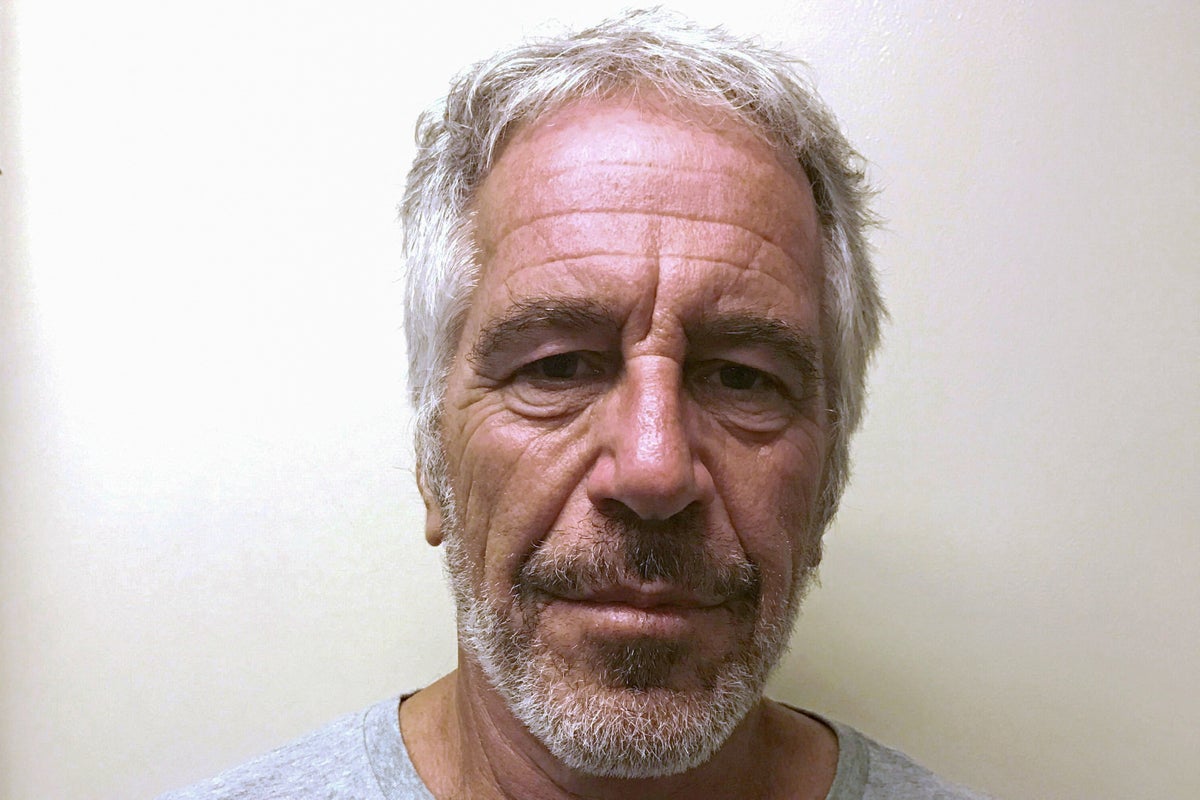

The game, a twisted parody of the popular Five Nights at Freddy's franchise, swaps out the animatronic jump-scares for imagery of Jeffrey Epstein. It is crude. It is disturbing. And it is exactly the kind of content that thrives in the blind spots of modern classroom technology.

The Myth of the Ironclad Firewall

School districts spend millions on content filtering and student-monitoring products like GoGuardian, Securly, or Gaggle. These platforms promise parents a walled garden where children can research history without stumbling into the gutters of the internet. The reality is far messier. These filters primarily work on a blacklist or whitelist system: they block known "bad" URLs or keywords, or they permit only sites on an approved list.

The problem? The modern web is built on dynamic, user-generated platforms.

Sites like Roblox, Scratch, and various "unblocked games" repositories host millions of individual files. A filter might allow a site because it is categorized as "educational" or "gaming," but it cannot possibly scan every line of code or every texture file within a user-made project. "Five Nights at Epstein" didn't exist as a standalone website. It existed as a "mod" or a project on a platform that the district likely deemed acceptable for recreational use during breaks.

When a filter sees a student accessing a domain like itch.io or a mirror site, it sees a valid connection. It doesn't see the specific, horrific content being rendered on the screen. This is a structural flaw in the "set it and forget it" mentality of school IT departments.
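The blind spot described above can be illustrated with a minimal sketch. It assumes a filter that decides only from a hostname category and a keyword list; the domains, categories, keywords, and the function name `domain_filter_allows` are all hypothetical and do not represent how any named product works internally.

```python
from urllib.parse import urlsplit

# Hypothetical category table; a real product would maintain a far larger one.
DOMAIN_CATEGORIES = {
    "games.example": "gaming",
    "learn.example": "educational",
    "adult.example": "adult",
}
ALLOWED_CATEGORIES = {"gaming", "educational"}
BLOCKED_KEYWORDS = {"gore", "casino"}  # hypothetical keyword blacklist


def domain_filter_allows(url: str) -> bool:
    """Allow or block using only the hostname's category and URL keywords."""
    host = (urlsplit(url).hostname or "").lower()
    if DOMAIN_CATEGORIES.get(host) not in ALLOWED_CATEGORIES:
        return False  # unknown or disallowed category
    lowered = url.lower()
    return not any(word in lowered for word in BLOCKED_KEYWORDS)


# A user-made project with an opaque URL on an allowed domain passes,
# because nothing in the decision looks at what the page actually renders.
print(domain_filter_allows("https://games.example/projects/48213"))
print(domain_filter_allows("https://adult.example/"))
```

In this sketch the first URL is allowed and the second is blocked. The filter never inspects the project itself, so a disturbing game with an innocuous address is indistinguishable from a harmless one.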

Why Gamification is Backfiring on Students

For the last decade, the education industry has pushed "gamification" as the silver bullet for student engagement. The logic was simple: kids like games, so if we make school feel like a game, they will learn more. This led to the mass adoption of Chromebooks and the integration of gaming platforms into the curriculum.

We have now reached the hangover stage of this experiment.

By turning the primary learning tool into a gaming console, schools have blurred the lines between work and play. Students have become incredibly adept at finding "backdoors." They use Google Translate as a proxy to browse blocked sites. They use "unblocked" mirrors hosted on GitHub. They find games buried within educational apps. The "Five Nights at Epstein" incident is just a high-profile example of a daily reality in almost every American middle school.

The educators are outmatched. A teacher managing twenty-five students cannot see every screen at every second. They rely on the software to be the chaperone, but the software is reactive, not proactive. It waits for a site to be reported before it blocks it. By then, the damage is done.

The Content Pipeline from Meme to Trauma

There is a specific, nihilistic subculture on the internet that finds humor in the grotesque. These creators know that children are their primary audience. By taking a brand kids already love, like Five Nights at Freddy's, and layering in references to real-world predators, they create a "forbidden fruit" effect.

It is a form of digital vandalism.

To a ten-year-old, the name "Epstein" might just be a meme they’ve heard on TikTok, associated with "the island" or "the list." They don't have the developmental capacity to understand the gravity of the crimes involved. The creators of these games exploit this ignorance, using shock value to gain "clout" or views. When this content reaches a classroom, it isn't just a distraction; it’s an intrusion of the most cynical corners of the internet into what should be a protected space.

The Liability Vacuum

Who is responsible when a child views Jeffrey Epstein-themed content on a taxpayer-funded device?

- The District blames the software provider for a "technical oversight."

- The Software Provider points to the "Terms of Service," which state that no filter is 100% effective.

- The Hosting Platform claims they are a "neutral carrier" protected by Section 230.

- The Teacher is already overwhelmed by a bloated curriculum and a lack of support.

The parent is left holding the bill for the therapy. This circle of finger-pointing ensures that no one actually fixes the root cause. The root cause is the over-reliance on automated systems to perform the moral and ethical task of supervision.

Hardware is Not a Babysitter

We have treated the 1:1 laptop initiative (one laptop for every student) as a mark of progress. In many ways, it has been a regression. It has isolated students behind screens, making their internal worlds harder for adults to monitor.

The Utah incident should be a catalyst for a "Zero-Trust" approach to educational hardware.

- Strict Whitelisting: Instead of blocking the bad, schools should only allow the good. If a site hasn't been manually vetted by a human being, it should be inaccessible. This is more work, but it is the only way to prevent "Epstein" games from appearing.

- Removal of Non-Essential Platforms: There is no pedagogical reason for a 10-year-old to have access to open-source gaming repositories on a school device. If a site isn't required for the lesson, it should be blocked.

- Physical Monitoring: No amount of software replaces a teacher walking the rows. We need to move away from the screen-first model of learning, where the device is the center of the room.
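As a rough illustration of the strict whitelisting idea in the list above, the following sketch denies everything by default and allows a URL only if a human has vetted its host and path prefix. The entries, reviewer notes, and the function name `allowlist_allows` are hypothetical, and the sketch ignores redirects and path normalization that a real deployment would need to handle.

```python
from urllib.parse import urlsplit

# Hypothetical vetted entries: (hostname, path prefix) -> who approved it.
VETTED_ENTRIES = {
    ("learn.example", "/"): "approved by district librarian",
    ("code.example", "/classroom/"): "approved by 5th-grade teacher",
}


def allowlist_allows(url: str) -> bool:
    """Default-deny: allow only HTTPS URLs under a human-vetted host and path."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    host = (parts.hostname or "").lower()
    path = parts.path or "/"
    return any(
        host == vetted_host and path.startswith(prefix)
        for vetted_host, prefix in VETTED_ENTRIES
    )


# The vetted classroom area is reachable; the open project gallery on the
# same host is not, because nobody reviewed it.
print(allowlist_allows("https://code.example/classroom/lesson-1"))
print(allowlist_allows("https://code.example/projects/48213"))
```

Vetting at the level of a path prefix rather than a whole domain is the design choice that matters here: it lets a district approve the curated part of a platform without also approving every user upload hosted beside it.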

The Tooele County School District issued a statement saying they have "updated their filters." This is a band-aid on a gunshot wound. Tomorrow, a different game with a different name will appear on a different site, and the cycle will repeat.

We are handing children the keys to the entire world, including its darkest alleys, and then acting surprised when they find the door to a monster's house. It is time to stop pretending that an algorithm can raise a child.

Demand a full audit of your child’s school device "allowed list" and ask specifically how they handle user-generated content platforms.