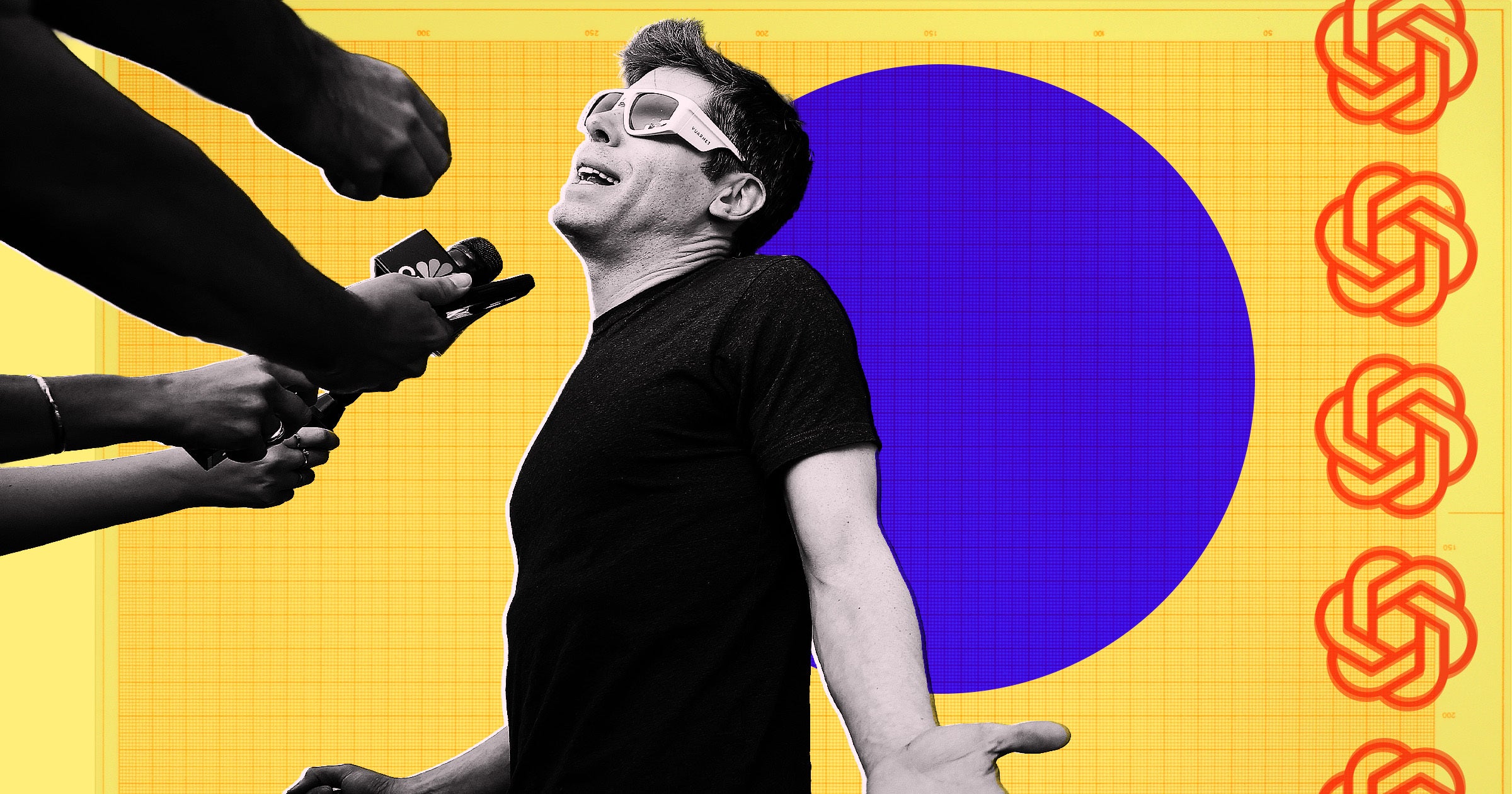

OpenAI is currently sitting on the most valuable real estate in the history of the internet, and they have no idea how to renovate it without burning the house down. While the tech industry fixates on the slow rollout of the ChatGPT ad pilot, the real story isn't about technical delays or cautious testing. It is about a fundamental identity crisis. OpenAI is trying to bridge the gap between a "clean" utility and a profit-hungry ad engine, and the early results suggest that the two might be chemically incompatible.

The pilot program, which has invited a select group of blue-chip brands to test sponsored links within the chat interface, was supposed to be the moment the company finally answered the $10 billion question of how to monetize non-enterprise users. Instead, it has become a bottleneck. Advertisers are growing restless because the current system lacks the predictability of Google Search or the surgical precision of Meta. They aren't just frustrated with the speed; they are frustrated with the silence.

The Ghost in the Ad Server

Traditional search advertising works because it is predictable. If a user types "best running shoes," Nike and Adidas bid on that keyword, and the results appear in a neatly labeled box. Generative AI shatters this model. When a chatbot synthesizes an answer, it doesn't just display a link; it provides a recommendation.

This creates a massive liability for OpenAI. If the model suggests a sponsored product that turns out to be a scam or a low-quality knockoff, the hallucination isn't just a technical glitch—it's a breach of trust that feels personal. Unlike a Google ad, which users have been trained to ignore as "paid content," a chatbot’s suggestion feels like a direct piece of advice.

The slow rollout is a direct result of this "Trust Gap." Internal sources suggest the engineering teams are terrified that aggressive ad placement will degrade the quality of the primary product. Every time an ad is forced into a conversation, it risks "distracting" the model's attention mechanism, leading to answers that feel less like intelligence and more like a sales pitch.

Why the Google Playbook Doesn't Work Here

Many analysts assume OpenAI will simply replicate the Google AdWords model. That is a mistake. Google sells intent. You tell the search bar what you want, and it shows you who sells it. ChatGPT users don't always show intent; they show curiosity. They are writing code, drafting emails, or asking for a summary of a complex historical event.

Inserting an ad for a CRM software into a prompt about 18th-century French poetry is a disaster for user experience.

The challenge lies in Contextual Relevance vs. Conversational Flow. If the ad is too subtle, the advertiser doesn't get the click. If it’s too loud, the user leaves for a cleaner alternative like Claude or a local open-source model. OpenAI is currently caught in a loop of testing "interruptive" ads versus "integrated" suggestions. The integrated approach—where the bot might say, "By the way, you can buy the ingredients for this recipe at [Brand]"—is far more effective, but it also triggers the most significant regulatory red flags regarding undisclosed paid endorsements.

The Hidden Cost of Computation

We need to talk about the math that the boardrooms are ignoring. Running a large language model (LLM) is far more expensive than running a standard search query. Estimates suggest a single ChatGPT query costs between 10 and 30 times more than a Google search.

- Google Search: Low cost, high ad density, high margin.

- ChatGPT: High cost, zero ad density (currently), negative margin for free users.

OpenAI is burning through cash at a rate that would make a 2010s-era Uber blush. The ad pilot isn't a "cool experiment" to see what happens; it is a desperate necessity to offset the astronomical compute costs of the free tier. The slow rollout indicates that the ad revenue per thousand impressions (RPM) isn't yet high enough to justify the "compute tax" of processing those ads. If it costs OpenAI $0.05 to generate an answer, but the ad only pays $0.02, they are literally paying for the privilege of showing you an ad you didn't want to see.
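To make that arithmetic concrete, here is a minimal sketch of the per-answer math. It uses the illustrative $0.05 and $0.02 figures from the paragraph above, not reported numbers, and it assumes one ad impression per answer. The function names are hypothetical and do not come from any OpenAI tooling.

```python
def margin_per_answer(cost_per_answer: float, ad_revenue_per_answer: float) -> float:
    """Net dollars earned (or lost, if negative) on one ad-supported answer."""
    return ad_revenue_per_answer - cost_per_answer


def implied_rpm(ad_revenue_per_answer: float, ads_per_answer: float = 1.0) -> float:
    """Revenue per 1,000 ad impressions implied by a per-answer payout."""
    if ads_per_answer <= 0:
        raise ValueError("ads_per_answer must be positive")
    return ad_revenue_per_answer / ads_per_answer * 1000


def break_even_rpm(cost_per_answer: float, ads_per_answer: float = 1.0) -> float:
    """RPM at which ad revenue exactly covers the compute cost of an answer."""
    if ads_per_answer <= 0:
        raise ValueError("ads_per_answer must be positive")
    return cost_per_answer / ads_per_answer * 1000


# Illustrative figures from the article (assumptions, not reported data).
cost = 0.05        # dollars of compute per answer
ad_revenue = 0.02  # dollars of ad revenue per answer

print(f"margin per answer: {margin_per_answer(cost, ad_revenue):.2f}")
print(f"implied RPM:       {implied_rpm(ad_revenue):.2f}")
print(f"break-even RPM:    {break_even_rpm(cost):.2f}")
```

Under these assumptions the ad earns the equivalent of a $20 RPM while the answer needs a $50 RPM just to break even, so every ad-supported answer loses about three cents. Showing more ads per answer lowers the break-even RPM, but that is exactly the density the product teams are reluctant to add.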

The Problem of Attribution

Advertisers are also hitting a wall with data. In the world of web tracking, we have "pixels" and "cookies" (even if they are dying) that tell a brand exactly where a customer came from. In a closed-loop chat environment, that data is obscured.

If a user asks for travel advice and eventually books a hotel, how does the hotel know the chatbot was the catalyst? OpenAI hasn't yet built the sophisticated dashboarding required for the "performance marketing" crowd. They are currently selling "brand awareness," which is a hard sell in an economy where every marketing dollar is being scrutinized for immediate ROI.

The Looming Regulatory Hammer

While the industry waits for the rollout to accelerate, the Federal Trade Commission (FTC) is watching. The primary concern is the Blurring of Content and Commerce.

In 2023, the FTC finalized revisions to its Endorsement Guides, which cover paid endorsements and reviews in digital media, and the agency has separately flagged "stealth" advertising as a concern. If an AI model recommends a product because it was paid to do so, but presents that recommendation as an objective "best choice," it may be violating consumer protection laws.

OpenAI's legal team is likely the biggest hand on the brake. They are trying to design a disclosure system that is clear enough to satisfy regulators but not so ugly that it ruins the "magic" of the interface. It’s a needle they haven't figured out how to thread.

The Architecture of Frustration

Inside the ad agencies, the mood is shifting from excitement to skepticism. "We were told this would be the new frontier," says one senior media buyer at a WPP-owned agency. "Instead, we’re getting the same 'we're still testing' line we got six months ago. It feels like they built a rocket ship but forgot to design the fuel tank."

The frustration stems from a lack of API Integration for Advertisers. Currently, the pilot is handled through manual, high-touch relationships. There is no self-service platform. You cannot just log in, set a budget, and target "users interested in Python programming." This manual process is slow, prone to human error, and completely unscalable.

OpenAI is also dealing with the "Brand Safety" nightmare. Imagine a brand paying for a sponsored link, only for the chatbot to hallucinate a controversy or use offensive language in the same breath. For a company like Coca-Cola or Procter & Gamble, that risk is unacceptable. The slow rollout is, in part, a massive effort to build "guardrails around the ads," ensuring that the bot doesn't say anything crazy while it's holding your shopping bag.

The Competition is Gaining Ground

While OpenAI hesitates, others are moving. Perplexity AI has already begun integrating a more aggressive "Proactive Discovery" model where brands can sponsor related questions. Because Perplexity is built as a "search engine first," their users already expect an ad-like experience. They don't have the same "purity" problem that ChatGPT has.

Microsoft, OpenAI's closest partner, is also a competitor here. Bing Chat (now Copilot) has been running ads for over a year. Microsoft has decades of experience in the ad-tech world through Bing and LinkedIn. OpenAI is trying to build that expertise from scratch while simultaneously trying to be a "research-first" non-profit/for-profit hybrid. It is a messy organizational structure that is clearly impacting product delivery.

The Pivot to "Action" Ads

The future of this pilot isn't in links. It’s in actions.

Instead of showing you a link to Expedia, the chatbot will eventually say, "I've found a flight for $400. Would you like me to book it now using your stored payment method?"

This is where the real money lives. OpenAI wants to move from being an "information provider" to being an "agent." If they can take a percentage of the transaction (a "success fee") rather than just a "click fee," the economics of AI change forever. But we are years away from the security and infrastructure needed to handle that at scale.

The Brutal Reality for the Industry

The "slow rollout" isn't a bug; it’s a symptom of a fundamental flaw in the LLM business model. Advertising as we know it—interruptive, visual, and volume-based—does not fit the intimate, text-based nature of a chatbot.

If OpenAI forces the issue, they risk turning the most impressive technological advancement of the decade into a bloated, ad-ridden mess like the modern web. If they don't, they may never find a way to pay the bills.

The industry insiders who are frustrated with the speed of the rollout should be more concerned with the quality of the integration. A fast rollout of a bad ad product is a death sentence for user retention. OpenAI knows this. They are stalling not because they can't do it, but because they are terrified of what happens once they do.

The next six months will determine if ChatGPT becomes a sustainable business or just a very expensive search engine that talks too much. Agencies shouldn't be asking when the pilot will expand; they should be asking if the platform will still be relevant once it’s covered in digital billboards.

Stop looking at the rollout schedule and start looking at the model's output quality during these tests. If the answers start getting shorter, more biased, or more focused on "buying" rather than "solving," the pilot has already failed.